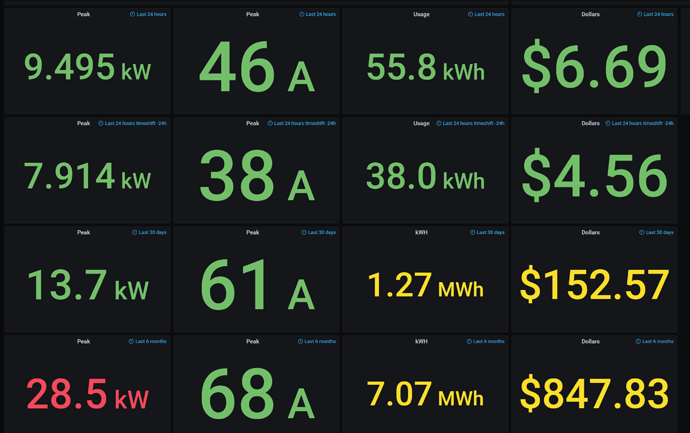

From browsing the forums here, I’ve gathered that Graph+ groups and averages the data before plotting it and before computing the data statistics. I had not noticed this was happening, or that the grouping changes automatically with the selected start/end time range. This makes the graph and metrics quite inaccurate and misleading. For example:

“What is the peak draw of this device?”

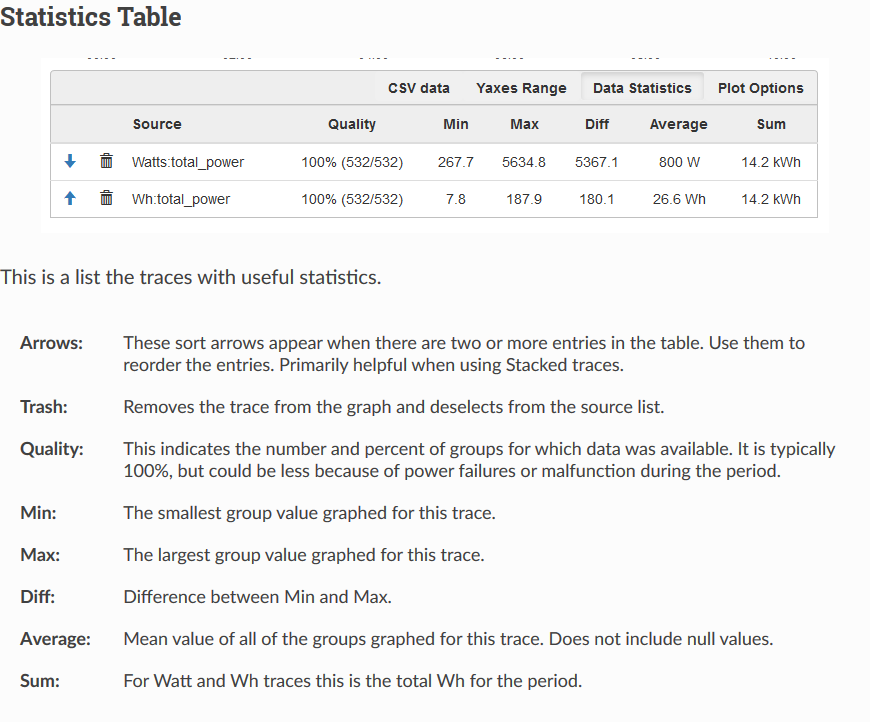

If you happen to select a period covering several days, you might get a “Max” value of 985.7 watts. But if you zoom in on a random peak of that graph, you suddenly get a “Max” of 1300.3 watts. That’s a massive difference, roughly a 32% increase (1300.3 / 985.7 ≈ 1.32), and it can lead to very incorrect assumptions about the power draw of devices.
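To show why averaging before taking the maximum hides peaks, here is a minimal Python sketch. I don't know how Graph+ actually groups data, so this is only an assumption: it uses plain fixed-size group means, and the `group_means` helper, the group size of 12, and the wattage values are all made up for illustration.

```python
def group_means(samples, group_size):
    """Average consecutive samples in fixed-size groups (hypothetical downsampling)."""
    means = []
    for start in range(0, len(samples), group_size):
        chunk = samples[start:start + group_size]
        means.append(sum(chunk) / len(chunk))
    return means

# Synthetic 5-second readings in watts: a steady load with one 10-second spike.
raw = [600.0] * 10 + [1300.0, 1300.0] + [600.0] * 12  # 24 samples = 2 minutes

# Assumed grouping: one-minute groups of 12 samples each.
grouped = group_means(raw, 12)

raw_max = max(raw)          # peak of the original readings
grouped_max = max(grouped)  # "peak" after averaging, always <= raw_max

print(f"Max of raw samples:  {raw_max:.1f} W")
print(f"Max of group means:  {grouped_max:.1f} W")
```

In this made-up data the short spike is diluted by the ten ordinary samples sharing its group, so the reported maximum drops well below the real peak. The wider the group, the more the spike is flattened.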

I’m sure the grouping is done to increase the speed of graphing and reduce the CPU/memory overhead for large selections. That said, if the ‘group’ is anything larger than 5 seconds, then the terms ‘Max’, ‘Min’, and ‘Diff’ are misleading and inaccurate. Are the ‘Average’ and ‘Sum/Integral’ accurate? How can anyone trust the data statistics or graph numbers?
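On the ‘Average’ and ‘Sum/Integral’ question, a rough Python sketch suggests those two could survive grouping even though the maximum does not. This holds only under assumptions I can't confirm for Graph+: every group contains the same number of samples and is reduced with a plain mean. The `group_means` helper, the 5-second sample interval, and the data are hypothetical.

```python
import math

SAMPLE_SECONDS = 5   # assumed logging interval
GROUP_SIZE = 12      # assumed grouping: 12 samples = one minute

def group_means(samples, group_size):
    """Average consecutive samples in fixed-size groups (hypothetical downsampling)."""
    means = []
    for start in range(0, len(samples), group_size):
        chunk = samples[start:start + group_size]
        means.append(sum(chunk) / len(chunk))
    return means

# Synthetic readings in watts; the length is a multiple of GROUP_SIZE,
# so every group is the same size.
raw = [600.0] * 10 + [1300.0, 1300.0] + [600.0] * 12
grouped = group_means(raw, GROUP_SIZE)

# Average: mean of equal-sized group means vs. mean of all samples.
raw_mean = sum(raw) / len(raw)
grouped_mean = sum(grouped) / len(grouped)

# Integral: energy in watt-hours, each value weighted by the time it covers.
raw_wh = sum(w * SAMPLE_SECONDS for w in raw) / 3600
grouped_wh = sum(w * SAMPLE_SECONDS * GROUP_SIZE for w in grouped) / 3600

print(math.isclose(raw_mean, grouped_mean))  # averages agree
print(math.isclose(raw_wh, grouped_wh))      # integrals agree
print(max(raw), max(grouped))                # maxima do not
```

If the groups are uneven (for example a partial group at the edge of the selected range) or the software weights them differently, the average and integral could drift as well, so this is not a guarantee about what Graph+ reports.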

My frustration stems from having handed out bad advice for the peak power draw of several devices due to the mishandled graphing and statistics. I bought the device to get accurate data, so when the graphing software is directly misleading, that negates a lot of the benefit and forces me to export all the data to something like InfluxDB/Grafana just to get accurate data representation. I really like the features of Graph+, and honestly prefer it to using Grafana, if only it were actually accurate over any large time period.

Can I override the ‘group’ setting and always force it to use 5-second intervals?