Hello Bob, I would appreciate your help, please. Sorry, this is a bit long-winded, but hopefully it contains relevant information.

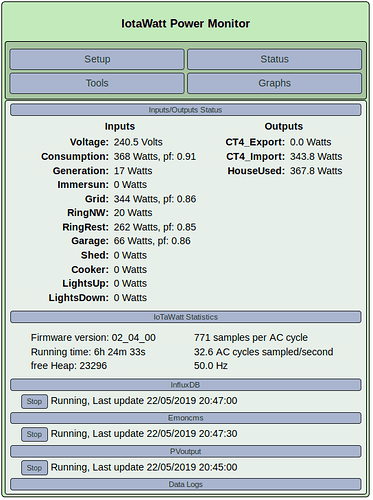

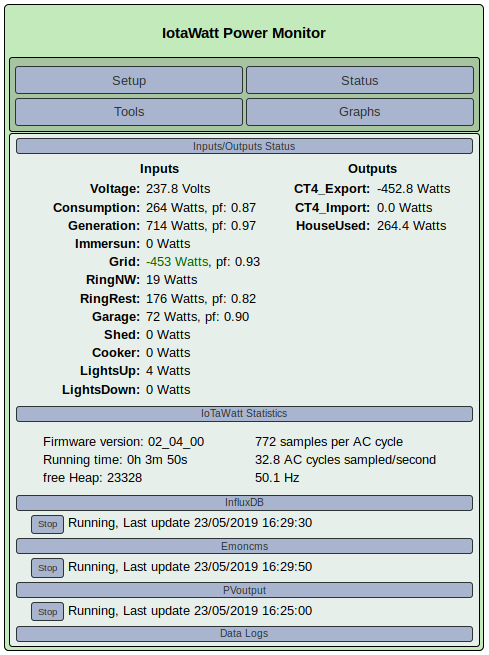

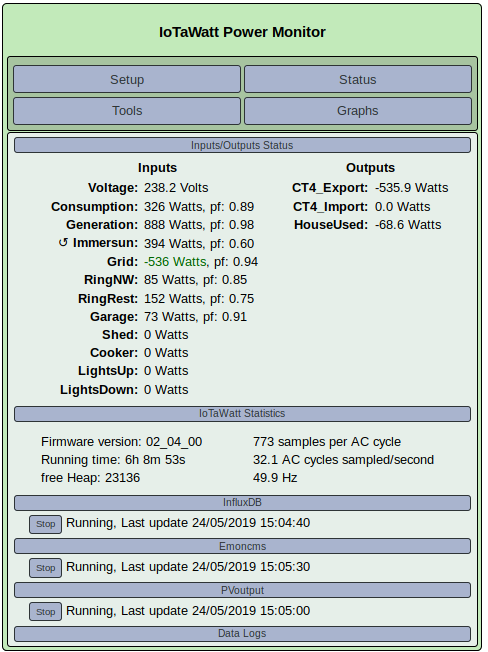

Our IoTaWatt was bought from the first available batch in the UK, along with one 20A and three 100A current transformers (circa October 2017). From this setup, the daily energy figures for solar generation, import, export, house usage, Immersun diversion (hot water) and the used solar generation are recorded on EmonCMS.org.

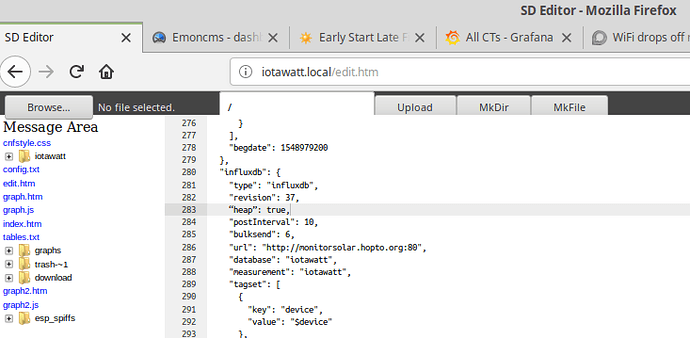

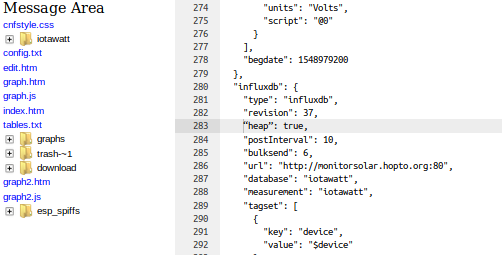

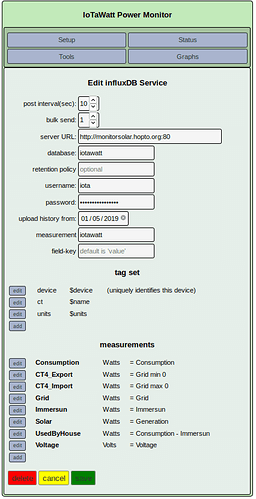

When the option to post to PVOutput became available, it was successfully started in mid-February 2019 (East & West 4.000kW Live Outputs). Later, when the option to post to InfluxDB / Grafana became available, it was also set up as an educational exercise.

On 4/29/19 @ 12:56:52z our village had an hour-long power failure followed by several short false starts.

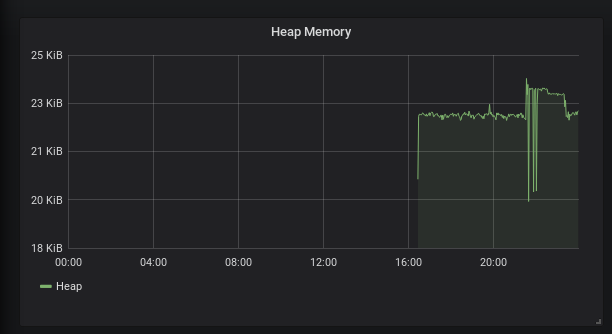

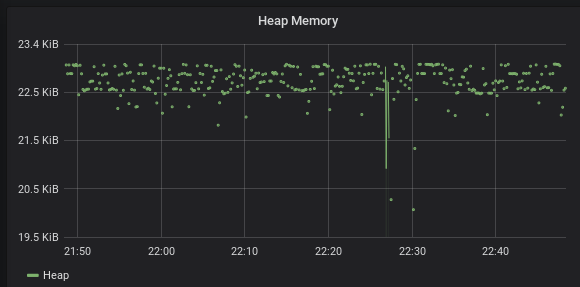

A while later it was noticed that the Running Time shown in the IoTaWatt Status window unexpectedly indicated a recent start-up. Investigation of the iotamsgs.txt message log revealed the entry “Heap memory has degraded below safe minimum, restarting” on 5/01/19 @ 08:47:22z, and this problem had occurred around 28 times by 07:11:07z on 5/12/19.

IoTaWatt Message Log.txt (9.6 KB)

Copy of iotamsgs_txt.txt (546.1 KB)
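For anyone wanting to check the restart count against the attached log, here is a minimal sketch in Python. It only assumes that each restart appears as a line containing the message text quoted above; the function names and the file path passed in are illustrative, not part of IoTaWatt.

```python
from typing import Iterable, List

# Message text exactly as quoted from the message log above.
HEAP_MESSAGE = "Heap memory has degraded below safe minimum, restarting"


def find_heap_restarts(lines: Iterable[str]) -> List[str]:
    """Return every log line that contains the heap-restart message."""
    return [line.rstrip("\n") for line in lines if HEAP_MESSAGE in line]


def count_heap_restarts_in_file(path: str) -> int:
    """Count heap-restart entries in a downloaded copy of the message log.

    The path is whatever name the log was saved under (an assumption here).
    """
    with open(path, encoding="utf-8", errors="replace") as log:
        return len(find_heap_restarts(log))
```

Calling `count_heap_restarts_in_file` on a saved copy of iotamsgs.txt should give the number of heap restarts, and `find_heap_restarts` returns the matching lines so their timestamps can be compared with when each upload service was started or stopped.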

In an attempt to identify the issue, several aspects were investigated:

- No extra wifi traffic found on channel 1

- No changes made to location of wifi equipment since IoTaWatt first started

- No known recent change to wifi signal strength

- The IoTaWatt unit displays a pulsating green indicator.

In case the issue was related to the number of uploads being performed (i.e. EmonCMS + PVOutput + InfluxDB), the following changes were carried out and their consequences noted:

a) InfluxDB posting stopped (posting to EmonCMS + PVOutput continued). The ‘Heap’ problem went away.

b) InfluxDB posting restarted. The ‘Heap’ problem reappeared.

c) PVOutput posting stopped (posting to EmonCMS + InfluxDB continued). PVOutput showed no further updates. The ‘Heap’ problem persisted.

d) Posting to PVOutput started again about a day later.

Finally, from a mechanical engineer of senior years used to working with gears, threads and metals, thank you Bob and all those who have helped to create a very useful and versatile energy monitoring tool.